Gaze – The Missing Input Device for Magical UIs

Eyes are the windows to the soul, right? So far, we have done a really bad job of utilizing this information in user interfaces. The next wave of personal computers – Virtual Reality headsets😎 – will use this information to its advantage. What kind of UIs can we design using eye-tracking? What does a gaze-based first-person shooter look like? And who the hell is King Midas?

😎 I am using the term Virtual Reality as an umbrella term for Virtual, Augmented, Mixed, Computer-mediated, and Extended Reality. Upcoming VR headsets with eye-tracking: Meta Quest Pro (2022), Pico 4 Pro (2022), PlayStation VR2 (2023), Apple Reality Pro (202?), Valve Deckard (202?), and others.

What is gaze?

We can describe gaze as the physiological manifestation of visual attention – it's where the eyes are focused. Focus is described not only by the direction of the eye, but also by the size of the pupil and the curvature of the lens.

Your eye muscles control where the eye is facing, i.e. how it is rotated. The pupil acts as an aperture and controls how much light from the surrounding world comes through. The lens then focuses incoming light on the retina, where light-sensitive cells detect it, and the information is transmitted through the optic nerve to the brain.

Eye Movement

Your eye muscles can move your eyes in two ways – smoothly and rapidly. When you fixate your attention on a specific moving object, your eyes smoothly follow the object. The same thing happens when you fixate your attention on a static object and move your head. You perceive a stable stream of visual information. On the other hand, rapid eye movements, also known as saccades, are a bit counter-intuitive.

Go in front of a mirror and look at your left eye, then your right, and keep alternating between them. Anything strange? You never see your eyes move. You can redo the experiment with a camera-enabled computer acting as a mirror: thanks to the slight delay the computer introduces, you can see your saccadic movement. Since saccades are extremely fast, the visual information we would get during one would be only a blurry image, so the brain actively suppresses it. As a result, we are practically blind during a saccade.
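Eye-tracking software has to tell saccades apart from fixations too, and a common way to do it is a velocity threshold: if the gaze direction changes faster than some angular speed, the sample belongs to a saccade. Here is a minimal sketch of that idea. The sample format (time in seconds, horizontal and vertical gaze angle in degrees), the function name, and the 100 degrees per second threshold are all assumptions made for illustration, not values from any particular headset.

```python
import math

SACCADE_VELOCITY_DEG_PER_S = 100.0  # illustrative threshold, an assumption


def label_samples(samples, threshold=SACCADE_VELOCITY_DEG_PER_S):
    """Label each step between consecutive gaze samples.

    samples: list of (t_seconds, x_deg, y_deg) tuples, sorted by time.
    Returns one label per consecutive pair: 'saccade' or 'fixation'.
    """
    labels = []
    for (t0, x0, y0), (t1, x1, y1) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt <= 0:
            continue  # skip duplicate or out-of-order timestamps
        # Angular speed between the two samples (small-angle approximation).
        speed = math.hypot(x1 - x0, y1 - y0) / dt
        labels.append("saccade" if speed > threshold else "fixation")
    return labels


# Hypothetical 100 Hz recording: the eye rests, jumps about 5 degrees, rests again.
recording = [(0.00, 0.0, 0.0), (0.01, 0.1, 0.0), (0.02, 5.0, 0.0), (0.03, 5.1, 0.0)]
labels = label_samples(recording)
```

A real tracker would also have to deal with noise, blinks, and smooth pursuit, which this sketch ignores.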

Eye as a Pointing Device

Since we know how we move our eyes, the obvious thing to do is replace all pointing devices – mouse, trackpad, trackpoint, trackball, 6DOF VR controller, finger pointing, etc. – with our eyes.

Shoot'em All

Let's imagine a simple VR gaze-shooting game – you are in an arena where small targets are flying around you. A single direct look at the red circle, the target, will shoot a laser beam from your eyes and destroy the target. Suddenly, eye-hand coordination is not necessary, and you know John Wick would not stand a chance against you. Your eyes are an extremely fast pointing device and work almost subconsciously.

To put the speed of your eyes into perspective – a single saccade takes 20 ms to 40 ms, while reaction time in a classic PC shooter using a mouse pointer is about 250 ms. That is roughly an order of magnitude faster.

Golden Gaze

This gaze-shooting game would get boring really fast. A competent game designer would suggest adding some distractors – objects that differ from our targets in color, shape, and size. So let's add slightly larger blue rectangles to our red circles. A direct look at a distractor would score negative points. The game now feels more balanced.

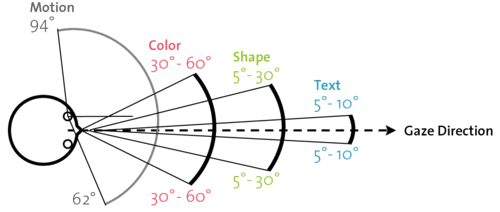

You would think that you would be careful enough to spot distractors with your peripheral vision and avoid them, right? Not really – your peripheral vision sucks. The farther something is from the gaze point, the blurrier, less colorful, and less precise it appears. This is why we need to move our eyes – you spot something interesting but not discernible in your periphery, so you steer your eyes to look directly at it and observe it with much greater acuity.

So if a distractor appears outside of your shape and color recognition area, you can't tell it apart from the target. You just see some blob and to assess its category you have to look at it. Boom! Your laser gaze destroyed it...

This problem is called the Midas Touch effect. King Midas wanted to turn everything he touched into gold. Unfortunately, this also applied to his daughter and his food. His golden touch turned out to be a curse.

Counteracting Golden Touch with Eyes Only

A simple solution is to trigger the action after some delay. The eye has to stay fixated on the target for a while, and only then is it time for action. This delay, or dwell time, is usually around 500 ms, so it is slower than mouse-based interaction and doesn't really solve the problem.
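The dwell-time idea can be sketched as a tiny timer that restarts every time the gaze lands on something new. This is only an illustration: the class name, the `update` method, and the way targets are identified are hypothetical, and the 0.5 s default simply mirrors the dwell time mentioned above.

```python
class DwellTrigger:
    """Fires once when the gaze stays on the same target for dwell_s seconds."""

    def __init__(self, dwell_s=0.5):
        self.dwell_s = dwell_s
        self.target = None   # target currently under the gaze
        self.since = None    # time the gaze arrived on it
        self.fired = False   # prevents firing repeatedly during one fixation

    def update(self, target, now):
        """Feed the target under the gaze (or None) and the current time in seconds.

        Returns the target to activate, or None if nothing should happen yet.
        """
        if target != self.target:
            # Gaze moved to something else: restart the timer.
            self.target, self.since, self.fired = target, now, False
            return None
        if target is not None and not self.fired and now - self.since >= self.dwell_s:
            self.fired = True
            return target
        return None


trigger = DwellTrigger(dwell_s=0.5)
events = [trigger.update("red circle", t) for t in (0.0, 0.3, 0.5, 0.6)]
```

In the game, a quick glance at a distractor would no longer shoot it, but every intended shot would cost you the full dwell time.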

An interesting solution is dual gaze, which would trigger the action only after an additional confirmation saccade:

In our eye-laser game, you would fixate on the blob (target or distractor) and then an additional little blob would pop up. Looking at it would trigger the laser gaze and destroy the target. This interaction can be done in less than 100 ms, which is more than twice as fast as mouse-based interaction.

And this is what real-world interaction with gaze could look like.
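The two-step logic of dual gaze fits in a small state machine. The sketch below is my own simplification of the idea, not the published technique in full: the class, the `FLAG` identifier for the pop-up blob, and the rule that looking at empty space keeps the pop-up alive (so samples taken mid-saccade don't cancel it) are all assumptions.

```python
class DualGaze:
    """Two-step selection: look at an object, then at its confirmation blob."""

    FLAG = "flag"  # hypothetical id for the pop-up confirmation blob

    def __init__(self):
        self.candidate = None  # object whose confirmation blob is currently shown

    def update(self, looked_at):
        """looked_at: an object id, DualGaze.FLAG, or None for empty space.

        Returns the activated object, or None if nothing was confirmed.
        """
        if looked_at == self.FLAG:
            if self.candidate is not None:
                # The confirmation saccade landed on the blob: activate.
                activated, self.candidate = self.candidate, None
                return activated
            return None
        if looked_at is not None:
            # A new object is under the gaze: move the confirmation blob to it.
            self.candidate = looked_at
        # Empty space keeps the current candidate, so in-flight samples
        # between the object and its blob do not cancel the selection.
        return None


game = DualGaze()
results = [game.update(x) for x in ("distractor", "target", None, DualGaze.FLAG, DualGaze.FLAG)]
```

Merely inspecting a distractor now does nothing. Only the deliberate second look fires the laser.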

Everyone can learn how to operate this interface in less than 2 minutes. Magic.

Counteracting Golden Touch with Non-Ocular Muscles

If we would like to use muscles other than the eye muscles, there are a ton of solutions. Mouse is back, baby! Any clicking mechanism would do. Or just pinching two fingers. Or an eye blink. Or a flick of your wrist, a head nod, a clap, a finger snap, a tongue click, or teeth chattering for some weird use cases. But really any muscle would do. Muscles of the pelvic floor being the most fun.

There doesn't have to be just a single method for triggering the action. You can mix and match techniques to create unique interactive experiences.

And of course, hands are still there, so you can combine eye gaze with hand gestures. This is a powerful combination and makes eye gaze tracking a very versatile interaction technique.

Eye as a Camera in Virtual Reality

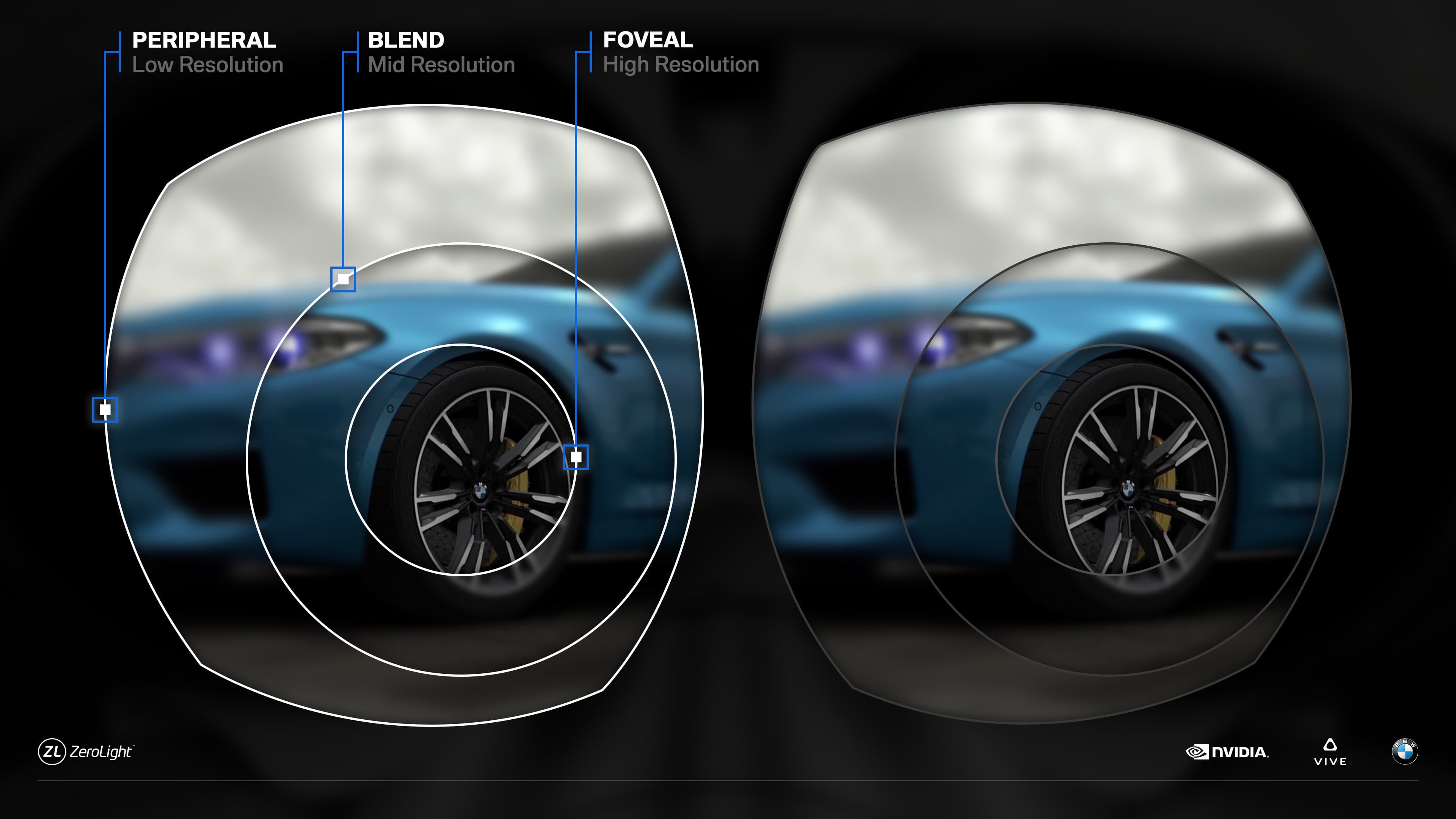

Gaze-tracking can be exploited to optimize rendering performance. Foveated rendering is a natural candidate – you render a crisp image around the gaze point and a progressively worse image towards the periphery.

Using our knowledge about the eye, we can design this image to be perceptually identical to the fully rendered image, while using just a fraction of computing power.
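To make this concrete, here is a rough sketch of how a renderer might pick a quality level per pixel from its distance to the gaze point. Everything specific in it is an assumption for illustration: the function names, the flat-screen approximation, and the 5 and 15 degree boundaries are not taken from any real headset or graphics API.

```python
import math


def eccentricity_deg(pixel, gaze, pixels_per_degree):
    """Angular distance of a pixel from the gaze point (flat-screen approximation)."""
    return math.hypot(pixel[0] - gaze[0], pixel[1] - gaze[1]) / pixels_per_degree


def shading_block_size(ecc_deg):
    """Side length, in pixels, of the block that shares one shaded sample."""
    if ecc_deg < 5.0:    # assumed foveal region: full resolution
        return 1
    if ecc_deg < 15.0:   # assumed mid-periphery: one sample per 2x2 block
        return 2
    return 4             # assumed far periphery: one sample per 4x4 block


# Hypothetical display with 20 pixels per degree, gaze at pixel (960, 540).
gaze = (960, 540)
near = shading_block_size(eccentricity_deg((980, 540), gaze, 20))   # 1 degree away
far = shading_block_size(eccentricity_deg((1560, 540), gaze, 20))   # 30 degrees away
```

With a 4x4 block, the far periphery needs one sixteenth of the shading work per pixel, which is where the savings come from.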

We can even incorporate information about blinks and active saccades – during these periods we are almost blind, so we can lower the computation cost even more. Ever heard of the blind spot? We don't have to render anything there.

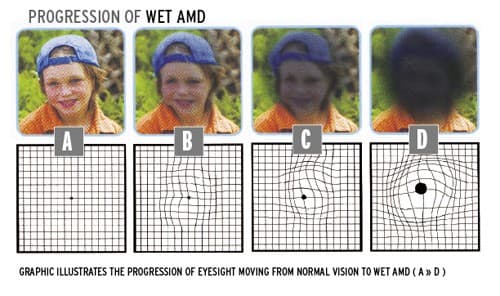

Or we can go further and intentionally degrade the image to simulate disabilities. For example, with macular degeneration, you can't really look directly at things because there is not enough information, so you have to look to the sides. Trying out sight loss due to cataracts and glaucoma might be an eye-opening experience for healthy people.

But enhancing the image is probably the most interesting application for rendering. Color-blind people might be able to differentiate between colors they normally can't. We might enhance healthy human vision to improve performance in some tasks. Also, an entirely new class of optical illusions might arise thanks to gaze-optimized rendering.

Social Aspects of Gaze-tracking

Having a face-to-face conversation in Virtual Reality can't be done properly without seeing the eyes of the other person. Eyes are the windows to the soul. Collaborating with someone without any signs of shared attention is a frustrating experience.

Producing visual content that maximizes the chance of a fixation seems like a user experience pattern that will be loved by designers but hated by users. Attention-conditioned ads seem like absolute hell.

Gaze-tracking data can be used to improve deepfakes – we fixate our gaze on out-of-distribution facial features immediately, so we could leverage this information to minimize such features.

Reading might be a pretty fruitful topic to explore. As you can currently experience, your eyes are jumping from word to word, subconsciously picking out where to look next. Sometimes there is bold text that tells you there is something important ahead of you. If we could minimize distraction during longer reading sessions, we could dramatically improve comprehension of the reading material. Dyslexia? Gone.

Writing might see a revolution too. Gaze swiping across a keyboard might turn out to be a faster input method than thumb typing. As an added bonus, we have our hands free to do important physical stuff.

Gaze Matters

As we have seen, gaze-enabled interfaces can deliver radically new ways of interacting with the world around us. Affecting the world with just a look feels very magical, but for some it may be the only way to communicate their great ideas.